Star History Monthly Apr 2024 | Open Source Prompt Engineering Guides & Tools

Prompt engineering is the practice of designing and refining the prompts or instructions given to a language model in order to elicit the desired responses. To make the most of a model's capabilities while keeping its responses accurate and tailored to your needs, you often have to craft the input carefully so that it guides the output towards specific objectives.
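To make "carefully crafting the input" a bit more concrete, here is a minimal sketch in Python that contrasts a vague prompt with a more specific one. The `build_prompt` helper and its field names are our own illustration, not part of any tool in this list.

```python
def build_prompt(task, audience, output_format, constraints=()):
    """Assemble a prompt from explicit parts (hypothetical helper for illustration)."""
    lines = [
        f"Task: {task}",
        f"Audience: {audience}",
        f"Output format: {output_format}",
    ]
    if constraints:
        lines.append("Constraints:")
        lines.extend(f"- {c}" for c in constraints)
    return "\n".join(lines)


# A vague prompt leaves the model guessing about scope, tone, and format.
vague_prompt = "Tell me about Python."

# A refined prompt states the objective, the reader, and the shape of the answer.
refined_prompt = build_prompt(
    task="Explain what Python list comprehensions are",
    audience="developers who know JavaScript but not Python",
    output_format="three short bullet points followed by one code example",
    constraints=["Keep it under 150 words", "Avoid jargon"],
)

print(refined_prompt)
```

The point is not the helper itself but the habit: spell out the task, the audience, and the expected format instead of hoping the model infers them.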

We divided this month's selections into two sections: the guides, which take you through the basics of prompt engineering, and the tools for managing your prompts.

The Guides

Prompt Engineering Guide

Prompt Engineering Guide is the holy grail of all guides: it aims to make it easier to stay up to date with prompt engineering guides, techniques, applications, and papers. If you are new to prompting, this is an excellent place to start.

Since going live at the end of 2022, Prompt Engineering Guide has grown to support 13 languages, its courses have educated over 3M learners, and its maintainers have put together a 1-hour lecture that provides an overview of prompting techniques, applications, and tools.

Awesome-GPTs-Prompts

Awesome-GPTs-Prompts is a curated list of prompts from the top-rated GPTs so that you can unlock the magic behind large language models more easily.

The curated list includes GPTs from the official GPT Store, prompts from the community, and some interesting resources on basic prompt engineering, prompt attacks, and prompt protection.

Prompt Management

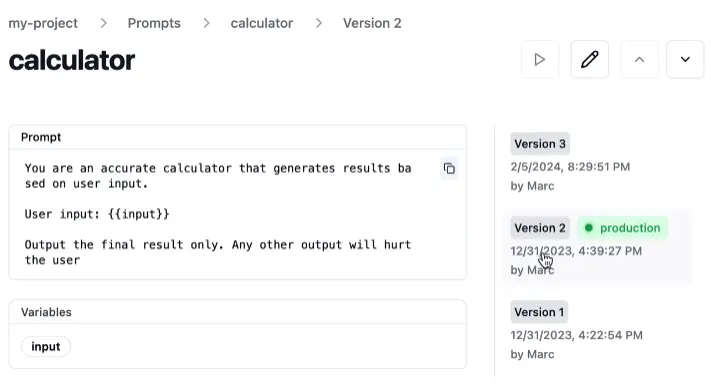

Langfuse

Once you have the basics of prompt engineering down, it's time to manage and test your prompts so that they are on their A-game.

Langfuse is an open-source LLM engineering platform that helps teams collaboratively debug, analyze, and iterate on their LLM applications.

With it, even non-technical users can manage, version, and deploy prompts. It's also pretty straightforward to roll back to a previous version of a prompt (we all make mistakes😬).
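If prompt versioning and rollback sound abstract, the idea can be sketched in a few lines of Python. This is a toy in-memory store written for illustration only; the `PromptStore` class and its methods are hypothetical and are not Langfuse's API.

```python
from dataclasses import dataclass, field


@dataclass
class PromptStore:
    """Toy in-memory prompt registry (hypothetical, not any real tool's API)."""
    versions: dict = field(default_factory=dict)  # prompt name -> list of prompt texts
    active: dict = field(default_factory=dict)    # prompt name -> deployed version (1-based)

    def publish(self, name, text):
        """Save a new version and make it the deployed one."""
        history = self.versions.setdefault(name, [])
        history.append(text)
        self.active[name] = len(history)
        return self.active[name]

    def rollback(self, name, version):
        """Point the deployed version back at an earlier one; history is kept."""
        if not 1 <= version <= len(self.versions.get(name, [])):
            raise ValueError(f"{name!r} has no version {version}")
        self.active[name] = version

    def get(self, name):
        """Return the text of the currently deployed version."""
        return self.versions[name][self.active[name] - 1]


store = PromptStore()
store.publish("summarize", "Summarize the text in three bullet points.")
store.publish("summarize", "Summarise teh text.")  # oops, a typo shipped
store.rollback("summarize", 1)                      # back to the good version
print(store.get("summarize"))
```

Because every version is kept, rolling back is just a matter of changing which version is deployed; nothing is overwritten.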

And there's more to it: tracing, monitoring, and testing are also part of the Langfuse platform.

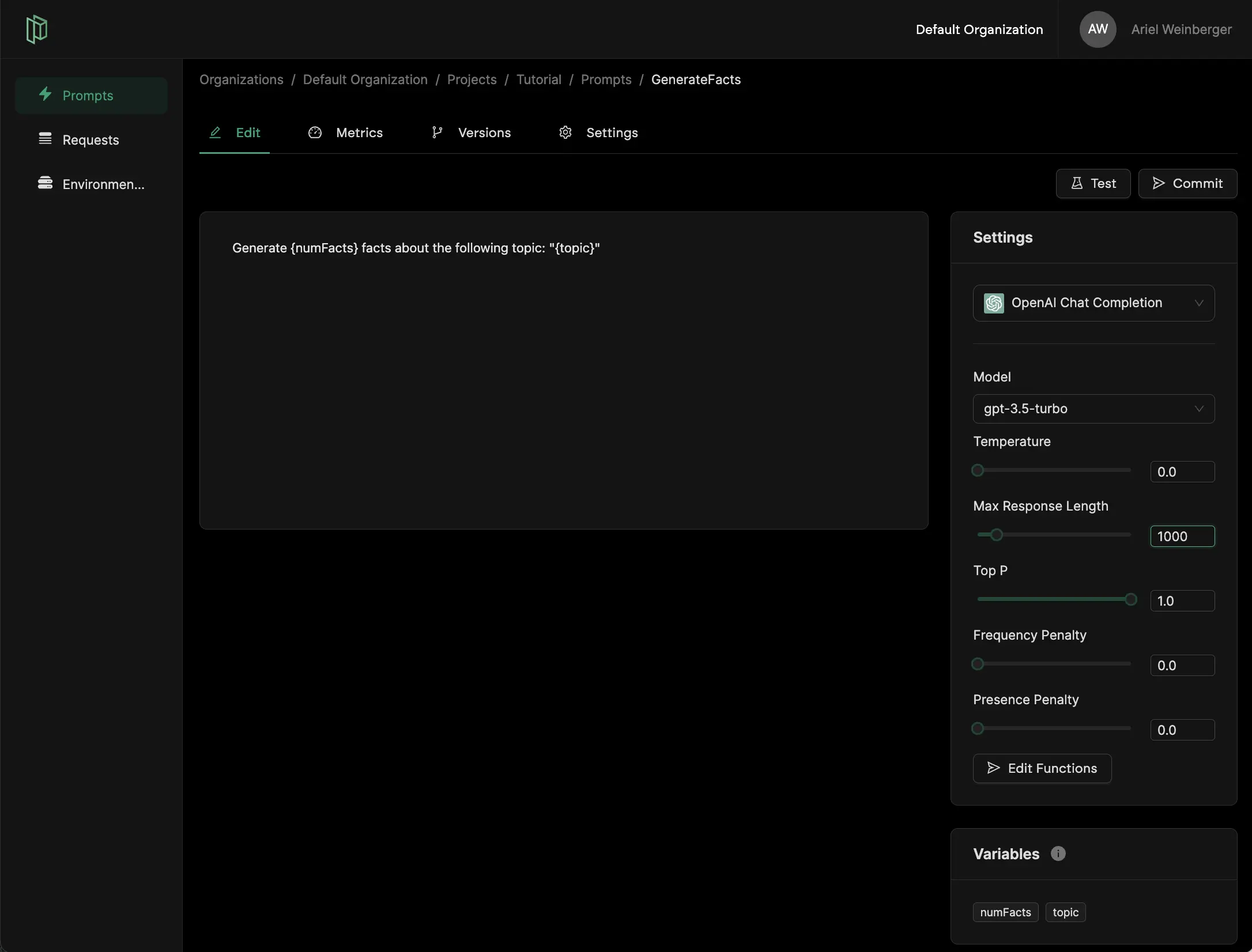

Pezzo

Pezzo is a cloud-native LLMOps platform. You can observe and monitor your AI operations, troubleshoot issues, collaborate and manage your prompts in one place, and instantly deliver AI changes.

With Pezzo, you have a centralized place for prompt management:

- Design, testing and versioning of prompts

- Instant prompt deployments

- Observability including prompt execution history, stats and metrics

- Troubleshooting to resolve issues with prompts

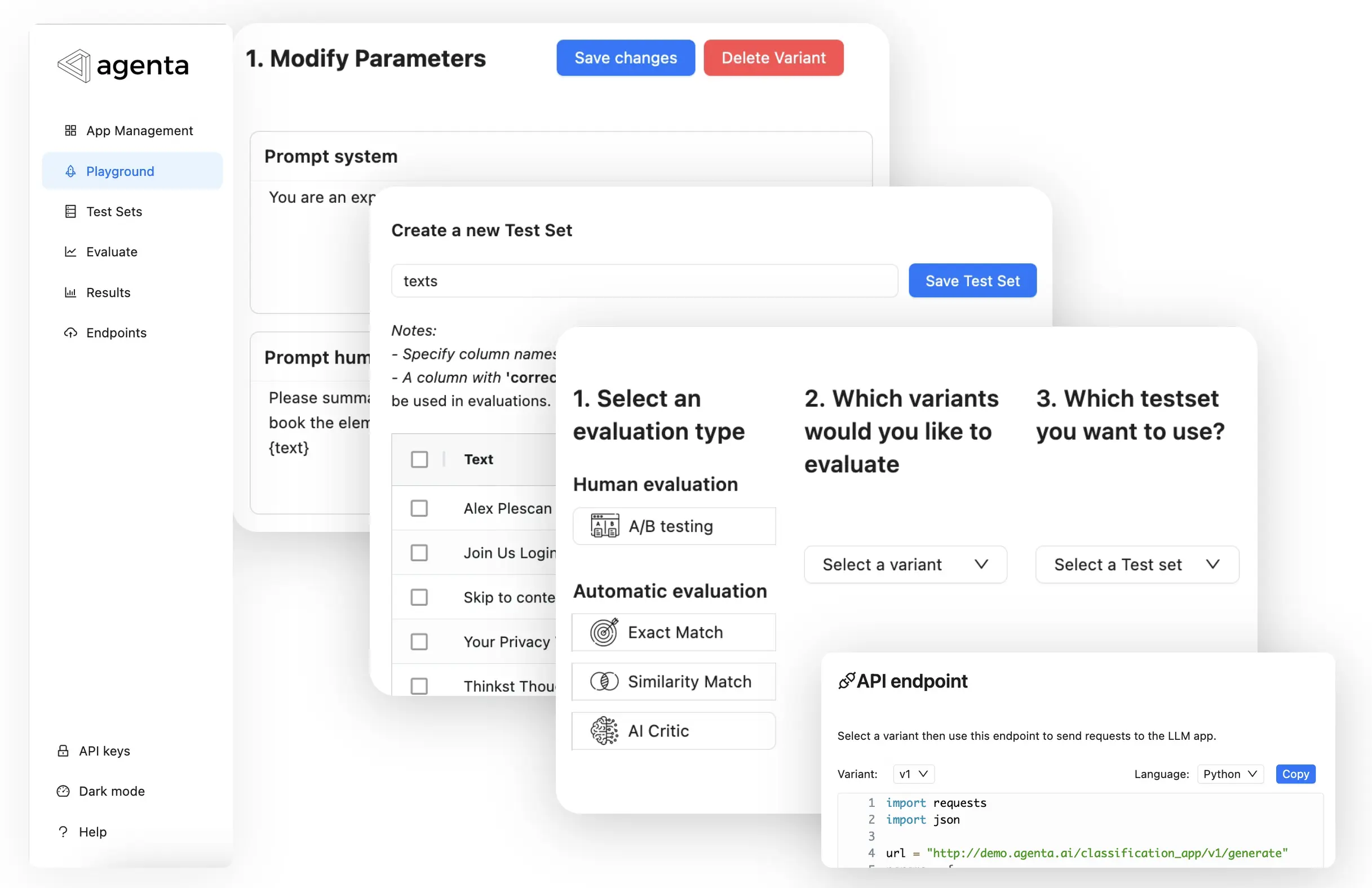

Agenta

Agenta is an end-to-end LLMOps platform. It provides tools for prompt engineering and management, evaluation, human annotation, and deployment.

Agenta takes prompt engineering very seriously and offers several ways to experiment with, evaluate, and compare your prompts.

You can either load test sets and run a set of configurable built-in evaluators, or simply compare variants side by side and view the results of multiple variants simultaneously.
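As a rough illustration of what evaluating prompt variants against a test set means, here is a small Python sketch. Everything in it is an assumption made for the example: `stub_model` is a toy stand-in for a real LLM call, `exact_match` is a simple evaluator we wrote ourselves, and none of it reflects Agenta's actual API.

```python
def stub_model(prompt):
    """Toy stand-in for an LLM call: it answers tersely only when asked to."""
    capitals = {"France": "Paris", "Japan": "Tokyo"}
    country = next(c for c in capitals if c in prompt)
    answer = capitals[country]
    return answer if "one word" in prompt else f"The capital is {answer}."


def exact_match(output, expected):
    """Evaluator: 1.0 if the output equals the expected answer, else 0.0."""
    return float(output.strip() == expected)


def evaluate_variants(variants, test_set, model, evaluator):
    """Run every prompt variant over the test set and return its average score."""
    results = {}
    for name, template in variants.items():
        scores = [
            evaluator(model(template.format(**case["inputs"])), case["expected"])
            for case in test_set
        ]
        results[name] = sum(scores) / len(scores)
    return results


variants = {
    "v1": "What is the capital of {country}?",
    "v2": "What is the capital of {country}? Answer in one word.",
}
test_set = [
    {"inputs": {"country": "France"}, "expected": "Paris"},
    {"inputs": {"country": "Japan"}, "expected": "Tokyo"},
]

results = evaluate_variants(variants, test_set, stub_model, exact_match)
print(results)  # {'v1': 0.0, 'v2': 1.0}
```

With the scores next to each other, it is easy to see that the more specific variant is the one that passes the exact-match check.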

Lastly

More companies than ever have launched their own AI tools, ranging from simple chatbots to automation and project management tools. As McKinsey puts it, "Prompt engineering is likely to become a larger hiring category in the next few years".

Getting the results you want is not that complicated, but giving a computer specific, clear instructions takes practice. We have put together a great list for you, so get started playing🤸.
